Fluid Lensing System for Imaging Underwater Environments

Fluid Lensing System for Imaging Underwater Environments (TOP2-284)

Next-generation sensing technologies for seeing through waves to explore ocean worlds

Overview

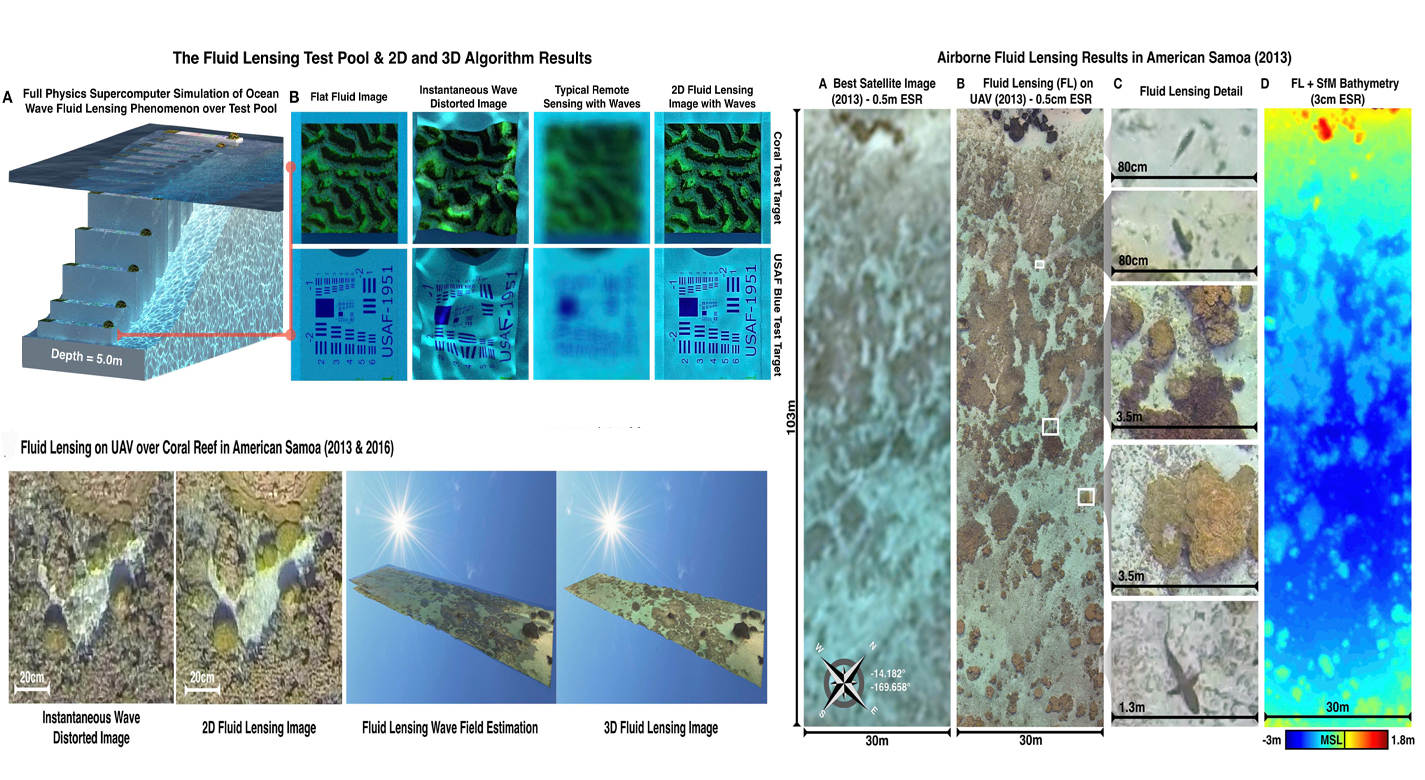

The optical interaction of light with fluids and aquatic surfaces is a complex phenomenon. At present, no remote sensing technologies can robustly image underwater objects at the cm-scale or finer due to surface wave distortion and the strong attenuation of light in the water column. As a consequence, our ability to accurately assess the status and health of shallow marine ecosystems, such as coral and stromatolite reefs, is severely impaired. NASA Ames has developed a first-of-its-kind remote sensing technology capable of imaging through ocean waves in 3D at sub-cm resolutions. The patented Fluid Lensing technology leverages optofluidic interactions, computational imaging, and fluid models to remove optical distortions and significantly enhance the angular resolution of an otherwise underpowered optical system.

The Technology

Fluid lensing exploits optofluidic lensing effects at two-fluid interfaces. Applied to an interface such as air and water, and coupled with computational imaging and a unique computer vision processing pipeline, it not only removes strong optical distortions along the line of sight but also significantly enhances the angular resolution and signal-to-noise ratio of an otherwise underpowered optical system. As high-frame-rate multi-spectral data are captured, fluid lensing software processes the data onboard and outputs a distortion-free 3D image of the benthic surface. This includes accounting for the way an object can look magnified or appear smaller than usual, depending on the shape of the wave passing over it, and for the increased brightness caused by caustics. By running complex calculations, the algorithm at the heart of fluid lensing technology is largely able to correct for these troublesome effects.

The process introduces a fluid distortion characterization methodology, caustic bathymetry concepts, the Fluid Lensing Lenslet Homography technique, and a 3D Airborne Fluid Lensing Algorithm as novel approaches for characterizing the aquatic surface wave field, modeling bathymetry using caustic phenomena, and performing robust high-resolution aquatic remote sensing. The formation of caustics by refractive lenslets is an important concept in the fluid lensing algorithm.

The use of fluid lensing technology on drones is a novel means for 3D imaging of aquatic ecosystems from above the water's surface at the centimeter scale. Fluid lensing data are captured from low-altitude, cost-effective electric drones to achieve multi-spectral imagery and bathymetry models at the centimeter scale over regional areas. In addition, this breakthrough technology is being developed for future in-space validation for remote sensing of shallow marine ecosystems from Low Earth Orbit (LEO).

NASA's FluidCam instrument, developed for airborne and spaceborne remote sensing of aquatic systems, is a high-performance multi-spectral computational camera using fluid lensing. The space-capable FluidCam instrument is robust and sturdy enough to collect data while mounted on an aircraft (including drones) over water.
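To give a feel for how a surface wave can act as a refractive lenslet and form caustics, the minimal sketch below applies the textbook paraxial formula for a single refracting surface to the crest of a sinusoidal wave. It is an illustration only, not NASA's Fluid Lensing algorithm: the function name, the sinusoidal wave model, the refractive index value, and the sample amplitude and wavelength are all assumptions made here.

```python
import math

N_AIR = 1.000
N_WATER = 1.333  # assumed approximate refractive index of water in visible light


def crest_focal_depth(amplitude_m: float, wavelength_m: float) -> float:
    """Paraxial depth at which a sinusoidal wave crest focuses collimated light.

    Assumed surface model: eta(x) = A * cos(k * x), with k = 2 * pi / wavelength.
    The radius of curvature at the crest is R = 1 / (A * k**2).
    For a single refracting surface and a source at infinity, the image
    distance inside the second medium is n2 * R / (n2 - n1).
    """
    k = 2.0 * math.pi / wavelength_m
    radius = 1.0 / (amplitude_m * k ** 2)
    return N_WATER * radius / (N_WATER - N_AIR)


# Hypothetical ripple: 1 cm amplitude, 50 cm wavelength.
depth = crest_focal_depth(amplitude_m=0.01, wavelength_m=0.5)
print(f"Approximate caustic focal depth: {depth:.2f} m")
```

Under these assumptions the focal depth scales inversely with wave amplitude, so steeper crests focus light at shallower depths. Real wave fields are not single sinusoids, which is one reason the source describes a dedicated fluid distortion characterization step.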

Benefits

- Addresses the surface wave distortion and optical absorption challenges posed by aquatic remote sensing

- Exploits surface waves as magnifying optical lensing elements, or fluid lensing lenslets, to enhance the spatial resolution and signal-to-noise properties of remote sensing instruments

- Enables robust imaging of underwater objects at sub-cm-scale spatial resolutions through refractive distortions from surface waves

- Estimates fluid dynamics and optical coupling, and computes an image with enhanced angular resolution

- Processes high-frame-rate multi-spectral imagery with fluid distortion characterization and the caustic bathymetry Fluid Lensing algorithm

- Tested in a number of different environments for verification and proof of concept

- 3D Fluid Lensing Algorithm validated on FluidCam from aircraft at multiple altitudes in real-world aquatic systems at depths up to 10 m

- Custom-designed and developed for airborne science and packaged into a 1.5U CubeSat form with space-capable components and design

Applications

- Marine industry

- Remote sensing missions

- sUAS-based science missions

- Science-based airborne and space-borne remote sensing

- Submerged asset imaging

- Marine debris

Technology Details

optics

TOP2-284

ARC-17922-1

U.S. Patent Application Publication No. 2019/0266712

https://www.nasa.gov/ames/las/fluidcam-suas-imaging-system

Chirayath, Ved, and Alan Li. "Next-Generation Optical Sensing Technologies for Exploring Ocean Worlds-NASA FluidCam, MiDAR, and NeMO-Net." Frontiers in Marine Science 6 (2019): 521. https://doi.org/10.3389/fmars.2019.00521

Similar Results

Multispectral Imaging, Detection, and Active Reflectance (MiDAR)

The MiDAR transmitter emits coded narrowband structured illumination to generate high-frame-rate multispectral video, perform real-time radiometric calibration, and provide a high-bandwidth simplex optical data-link under a range of ambient irradiance conditions, including darkness. A theoretical framework, based on unique color band signatures, is developed for multispectral video reconstruction and optical communications algorithms used on MiDAR transmitters and receivers. Experimental tests demonstrate a 7-channel MiDAR prototype consisting of an active array of multispectral high-intensity light-emitting diodes (MiDAR transmitter) coupled with a state-of-the-art, high-frame-rate NIR computational imager, the NASA FluidCam NIR, which functions as a MiDAR receiver. A 32-channel instrument is currently in development.

Preliminary results confirm efficient, radiometrically calibrated, high signal-to-noise ratio (SNR) active multispectral imaging in 7 channels from 405-940 nm at 2048x2048 pixels and 30 Hz. These results demonstrate a cost-effective and adaptive sensing modality, with the ability to change color bands and relative intensities in real time in response to changing science requirements or dynamic scenes. Potential applications of MiDAR include high-resolution nocturnal and diurnal multispectral imaging from air, space, and underwater environments as well as long-distance optical communication, bidirectional reflectance distribution function characterization, mineral identification, atmospheric correction, UV/fluorescent imaging, 3D reconstruction using Structure from Motion (SfM), and underwater imaging using Fluid Lensing. Multipurpose sensors, such as MiDAR, which fuse active sensing and communications capabilities, may be particularly well-suited for mass-limited robotic exploration of Earth and the solar system and represent a possible new generation of instruments for active optical remote sensing.

Computational Visual Servo

The innovation improves upon the performance of passive automatic enhancement of digital images. Specifically, the image enhancement process is improved in terms of resulting contrast, lightness, and sharpness over the prior art of automatic processing methods. The innovation brings the technique of active measurement and control to bear upon the basic problem of enhancing the digital image by defining absolute measures of visual contrast, lightness, and sharpness. This is accomplished by automatically applying the type and degree of enhancement needed based on automated image analysis.

The foundation of the processing scheme is the flow of digital images through a feedback loop whose stages include visual measurement computation and servo-controlled enhancement effect. The cycle is repeated until the servo achieves acceptable scores for the visual measures or reaches a decision that it has enhanced as much as is possible or advantageous. The servo-control will bypass images that it determines need no enhancement.

The system determines experimentally how much sharpening, in absolute terms, can be applied before detrimental sharpening artifacts appear. The latter decisions are stop decisions, triggered when further contrast or lightness enhancement would produce unacceptable levels of saturation, signal clipping, or sharpness.
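The measure-then-enhance feedback loop described above can be sketched in a few lines. This is a simplified illustration, not the patented servo: the contrast measure (standard deviation), the clipping test, the function names, and all thresholds are hypothetical choices made here, and only contrast is controlled.

```python
import numpy as np


def contrast_score(image: np.ndarray) -> float:
    """Hypothetical visual measure: standard deviation of pixel values in [0, 1]."""
    return float(image.std())


def clipped_fraction(image: np.ndarray) -> float:
    """Fraction of pixels saturated at 0 or 1."""
    return float(np.mean((image <= 0.0) | (image >= 1.0)))


def servo_enhance(image, target_contrast=0.2, gain_step=1.1,
                  max_clipped=0.01, max_iterations=50):
    """Stretch contrast about the mean until the target is met or a stop rule fires.

    Returns the enhanced image, the number of enhancement steps applied,
    and a short string describing why the loop ended.
    """
    current = np.clip(np.asarray(image, dtype=float), 0.0, 1.0)
    for iteration in range(max_iterations):
        # Measurement stage: an image that already scores well is bypassed.
        if contrast_score(current) >= target_contrast:
            return current, iteration, "target reached"
        # Enhancement stage: propose a slightly stronger contrast stretch.
        mean = current.mean()
        candidate = np.clip(mean + gain_step * (current - mean), 0.0, 1.0)
        # Stop decision: reject the step if it would clip too many pixels.
        if clipped_fraction(candidate) > max_clipped:
            return current, iteration, "stopped: further gain would clip"
        current = candidate
    return current, max_iterations, "stopped: iteration limit"


# Usage with a synthetic low-contrast image.
rng = np.random.default_rng(0)
hazy = 0.5 + 0.02 * rng.standard_normal((64, 64))
enhanced, steps, reason = servo_enhance(hazy)
print(steps, reason)
```

The loop has the same three exits the text describes: the score is acceptable, further enhancement would be harmful, or the image needed no enhancement at all (zero steps).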

The invention was developed to provide completely new capabilities for exceeding pilot visual performance by clarifying turbid, low-light level, and extremely hazy images automatically for pilot view on heads-up or heads-down display during critical flight maneuvers.

Grid-Oriented Normalization for Analysis of Spherical Areas (GONASA)

NASA's GONASA technology is a mathematical formula / algorithm built around creating a grid composed of equal-area cells that span the entire visible hemisphere of a spherical object. Traditional longitude and latitude grids produce cells that diminish in size toward the poles due to convergence of longitudinal lines. GONASA circumvents this problem by carefully adjusting the latitude increments, resulting in a network of truly equal-area cells. This adjustment ensures that any feature observed on the spherical surface is accurately represented, regardless of its location.

To implement GONASA, the spherical surface is first segmented into discrete latitude bands or rings, each chosen to encompass an identical surface area. Within each ring, longitude divisions maintain equal cell areas, creating a uniform Cartesian grid. The result is a consistent, distortion-corrected matrix suitable for automatic computation, enabling simplified, efficient, and accurate measurements of spatial characteristics such as feature area, centroid location, perimeter, compactness, orientation, and aspect ratio.

GONASA grids are computationally efficient and readily adaptable to a range of data processing workflows, from spreadsheets to sophisticated data analysis frameworks like Pandas data frames in Python. Due to their consistent cell sizing and straightforward indexing, GONASA grids facilitate automation, enabling rapid, high-volume data processing and analysis, essential for modern remote sensing and planetary missions that require immediate, reliable data analysis in limited-bandwidth communications environments. At NASA, GONASA has already been successfully implemented to study images of Titan (e.g., mapping its clouds) taken by the Cassini space probe.
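The equal-area band construction described above can be illustrated with a short sketch. It relies on a standard geometric fact: on a unit sphere, the area between two latitudes is proportional to the difference of their sines, so equal steps in sin(latitude) yield equal-area bands. This is not necessarily the GONASA formula itself; the function names and the choice to span latitudes and longitudes from -90 to +90 degrees for the visible hemisphere are assumptions made here.

```python
import numpy as np


def equal_area_latitude_edges(n_bands: int) -> np.ndarray:
    """Latitude edges (radians) that split a sphere into equal-area bands.

    Band area on a unit sphere is proportional to sin(lat2) - sin(lat1),
    so the edges are evenly spaced in sin(latitude), not in latitude.
    """
    return np.arcsin(np.linspace(-1.0, 1.0, n_bands + 1))


def hemisphere_cell_areas(n_bands: int, n_sectors: int) -> np.ndarray:
    """Cell areas (unit sphere) for an n_bands x n_sectors hemisphere grid."""
    lat_edges = equal_area_latitude_edges(n_bands)
    # Assumed: the visible hemisphere spans 180 degrees of longitude.
    lon_edges = np.linspace(-np.pi / 2.0, np.pi / 2.0, n_sectors + 1)
    # Cell area = (longitude width) * (difference of sines of latitude edges).
    return np.outer(np.diff(np.sin(lat_edges)), np.diff(lon_edges))


areas = hemisphere_cell_areas(n_bands=6, n_sectors=8)
print(np.degrees(equal_area_latitude_edges(6)).round(1))
print(np.allclose(areas, areas[0, 0]), np.isclose(areas.sum(), 2.0 * np.pi))
```

The resulting array is indexed by (band, sector), which maps directly onto a spreadsheet or a Pandas data frame as mentioned above.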

Reflection-Reducing Imaging System for Machine Vision Applications

NASA's imaging system comprises a small CMOS camera fitted with a C-mount lens affixed to a 3D-printed mount. Light from the high-intensity LED is passed through a lens that both diffuses and collimates the LED output, and this light is coupled onto the camera's optical axis using a 50:50 beam-splitting prism.

Use of the collimating/diffusing lens to condition the LED output provides for an illumination source that is of similar diameter to the camera's imaging lens. This is the feature that reduces or eliminates shadows that would otherwise be projected onto the subject plane as a result of refractive index variations in the imaged volume. By coupling the light from the LED unit onto the camera's optical axis, reflections from windows, which are often present in wind tunnel facilities to allow for direct views of a test section, can be minimized or eliminated when the camera is placed at a small angle of incidence relative to the window's surface. This effect is demonstrated in the image on the bottom left of the page.

Eight imaging systems were fabricated and used for capturing background oriented schlieren (BOS) measurements of flow from a heat gun in the 11-by-11-foot test section of the NASA Ames Unitary Plan Wind Tunnel (see test setup on right). Two additional camera systems (not pictured) captured photogrammetry measurements.

Assembly for Simplified Hi-Res Flow Visualization

NASA's single-grid, self-aligned focusing schlieren optical assembly is attached to a commercial-off-the-shelf camera. It directs light from the light source through a condenser lens and linear polarizer towards a polarizing beam-splitter where the linear, vertically-polarized component of light is reflected onto the optical axis of the instrument. The light passes through a Ronchi ruling grid, a polarizing prism, and a quarter-wave plate prior to projection from the assembly as right-circularly polarized light. The grid-patterned light (having passed through the Ronchi grid) is directed past the density object onto a retroreflective background that serves as the source grid. Upon reflection off the retroreflective background, the polarization state of light is mirrored. It passes the density object a second time and is then reimaged by the system. Upon encountering the polarizing prism the second time, the light is refracted, resulting in a slight offset. This refracted light passes through the Ronchi ruling grid, now serving as the cutoff grid, for a second time before being imaged by the camera.
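The polarization steps in this light path can be checked with Jones calculus. The sketch below is an idealized illustration, not a model of NASA's assembly: it assumes a lossless quarter-wave plate with its fast axis at 45 degrees, treats the retroreflective background as leaving the Jones vector unchanged in a fixed lab frame, and ignores the prism and grid. It shows that one pass turns vertical linear light into circular light, and that the return pass leaves it in the orthogonal (horizontal) linear state, which is what lets a polarizing element treat outgoing and returning light differently.

```python
import numpy as np


def quarter_wave_plate(theta: float) -> np.ndarray:
    """Jones matrix of an ideal quarter-wave plate with fast axis at angle theta."""
    c, s = np.cos(theta), np.sin(theta)
    rotation = np.array([[c, -s], [s, c]])
    retarder = np.diag([1.0, 1.0j])  # quarter-wave phase shift between the axes
    return rotation @ retarder @ rotation.T


vertical = np.array([0.0, 1.0], dtype=complex)  # Jones vector [Ex, Ey]
qwp = quarter_wave_plate(np.pi / 4.0)           # assumed 45 degree orientation

outgoing = qwp @ vertical         # state after the first pass
returning = qwp @ qwp @ vertical  # state after the return pass (ideal reflector)

# Circular light has equal component magnitudes and a 90 degree phase difference.
print(np.abs(outgoing).round(3), np.degrees(np.angle(outgoing[1] / outgoing[0])))
# After the double pass the light is linear along x (horizontal).
print(np.abs(returning).round(3))
```

Handedness labels depend on sign conventions, so the sketch deliberately reports only magnitudes and the relative phase.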

Both small- and large-scale experimental setups have been evaluated and shown to be capable of fields of view of 10 and 300 millimeters, respectively. Observed depths of field were found to be comparable to existing systems. Light sources, polarizing prisms, retroreflective materials, and lenses can be customized to suit a particular experiment. For example, with a high-speed camera and laser light source, the system has collected flow images at a rate of 1 MHz.