
PATENT PORTFOLIO

Information Technology and Software

NASA develops information technology and software to support a wide range of activities, including mission planning and operations, data analysis and visualization, and communication and collaboration. These technologies also play a key role in the development of advanced scientific instruments and spacecraft, as well as in the management of NASA's complex organizational and technical systems. By leveraging NASA's advances in IT and software, your business can gain a competitive edge.

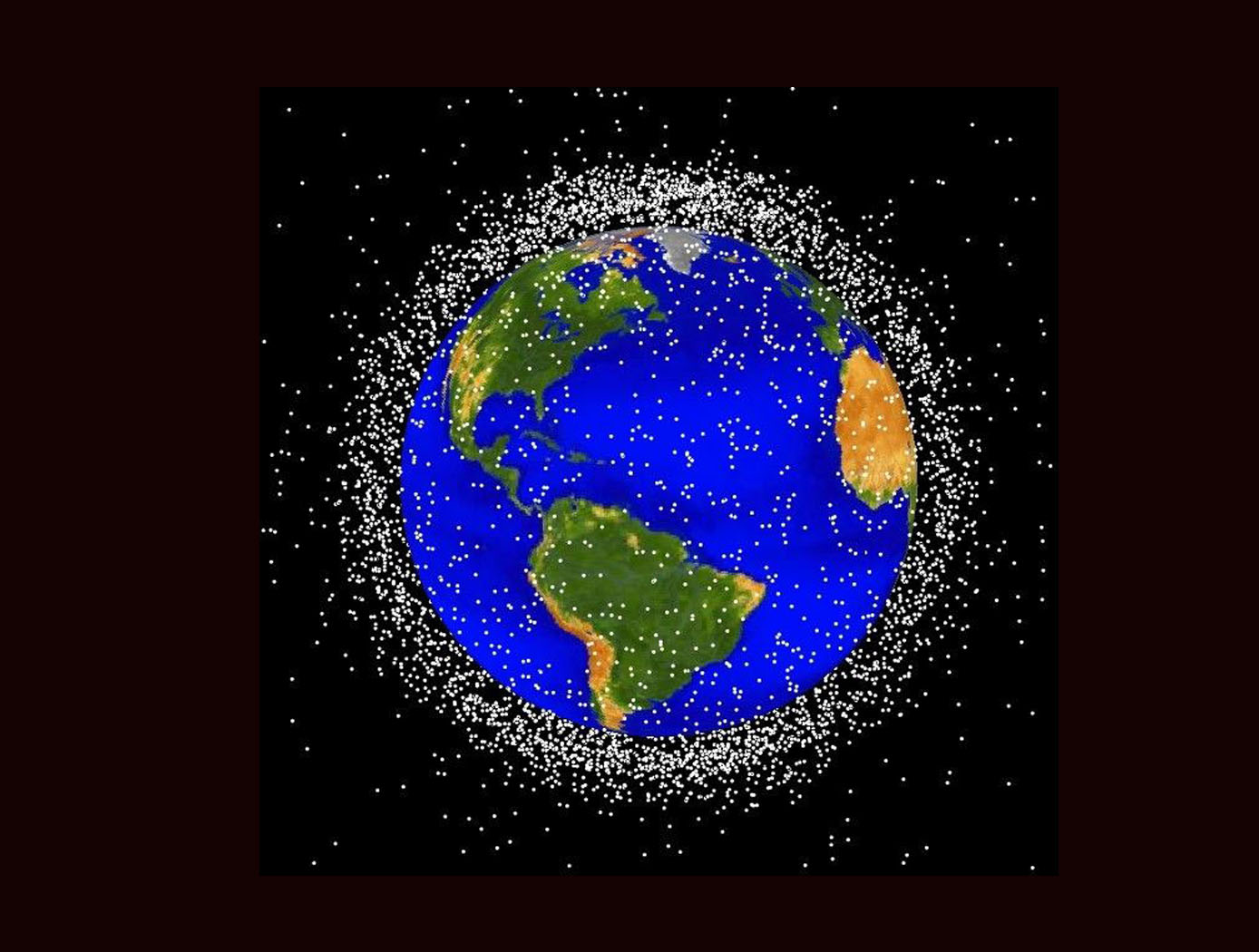

Space Traffic Management (STM) Architecture

As ever larger numbers of spacecraft seek to make use of Earth's limited orbital volume in increasingly dense orbital regimes, greater coordination becomes necessary to ensure these spacecraft are able to operate safely while avoiding physical collisions, radio-frequency interference, and other hazards. While efforts to date have focused on improving Space Situational Awareness (SSA) and enabling operator-to-operator coordination, there is growing recognition that a broader system for Space Traffic Management (STM) is necessary. The STM architecture forms the framework for an STM ecosystem, which enables the addition of third parties that can identify and fill niches by providing new, useful services. By making the STM functions available as services, the architecture reduces the amount of expertise that must be available internally within a particular organization, thereby reducing the barriers to operating in space and providing participants with the information necessary to behave responsibly. Operational support for collision avoidance, separation, etc., is managed through a decentralized architecture, rather than via a single centralized government-administered system.

The STM system is based on standardized Application Programming Interfaces (APIs), which ease interconnection, and on a conceptual definition of roles, which makes it easier for suppliers with different capabilities to add value to the ecosystem. The architecture handles basic functions including registration, discovery, authentication of participants, and auditable tracking of data provenance and integrity. The technology is able to integrate data from multiple sources.

Computer Vision Lends Precision to Robotic Grappling

The goal of this computer vision software is to take the guesswork out of grapple operations aboard the ISS by providing a robotic arm operator with real-time pose estimation of the grapple fixtures relative to the robotic arm’s end effectors. To solve this Perspective-n-Point challenge, the software uses computer vision algorithms to determine alignment solutions between the position of the camera eyepoint and the position of the end effector, because the borescope camera sensors are typically located several centimeters from their respective end effector grasping mechanisms.
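The eyepoint-to-end-effector alignment described above can be illustrated with a rigid-body frame change. This is only a minimal sketch, not NASA's algorithm: it assumes a pose solver has already produced the fixture position in the camera frame, and the function names, the identity rotation, and the 5 cm offset are all hypothetical values chosen for illustration.

```python
from typing import List, Sequence

Vector = Sequence[float]
Matrix = Sequence[Sequence[float]]


def mat_vec(m: Matrix, v: Vector) -> List[float]:
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def camera_to_effector(p_cam: Vector, r_eff_cam: Matrix, t_eff_cam: Vector) -> List[float]:
    """Express a point measured in the camera frame in the end-effector frame.

    Applies p_eff = R * p_cam + t, where R and t describe the (assumed known,
    fixed) mounting of the camera relative to the end effector.
    """
    rotated = mat_vec(r_eff_cam, p_cam)
    return [rotated[i] + t_eff_cam[i] for i in range(3)]


# Hypothetical mounting: camera axes aligned with the end effector,
# camera origin sitting 5 cm along the end effector's y axis.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
CAMERA_OFFSET_M = [0.0, 0.05, 0.0]

# Hypothetical fixture position reported in the camera frame (meters).
fixture_in_camera = [0.10, -0.05, 1.20]
fixture_in_effector = camera_to_effector(fixture_in_camera, IDENTITY, CAMERA_OFFSET_M)
```

With these made-up numbers, a fixture that appears 5 cm off-axis to the camera is centered on the end effector, which is the kind of correction an operator would otherwise have to estimate by eye.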

The software includes a machine learning component that uses a trained Region-based Convolutional Neural Network (R-CNN) to provide the capability to analyze a live camera feed to determine ISS fixture targets a robotic arm operator can interact with on orbit. This feature is intended to increase the grappling operational range of ISS’s main robotic arm from a previous maximum of 0.5 meters for certain target types, to greater than 1.5 meters, while significantly reducing computation times for grasping operations.

Industrial automation and robotics applications that rely on computer vision solutions may find value in this software’s capabilities. A wide range of emerging terrestrial robotic applications, outside of controlled environments, may also find value in the dynamic object recognition and state determination capabilities of this technology as successfully demonstrated by NASA on-orbit.

This computer vision software is at technology readiness level (TRL) 6 (system/sub-system model or prototype demonstration in an operational environment), and the software is now available to license. Please note that NASA does not manufacture products itself for commercial sale.

Traffic Aware Strategic Aircrew Requests (TASAR)

The NASA software application developed under the TASAR project is called the Traffic Aware Planner (TAP). TAP automatically monitors for flight optimization opportunities in the form of lateral and/or vertical trajectory changes. Surveillance data of nearby aircraft, using ADS-B IN technology, are processed to evaluate and avoid possible conflicts resulting from requested changes in the trajectory. TAP also leverages real-time connectivity to external information sources, if available, of operational data relating to winds, weather, restricted airspace, etc., to produce the most acceptable and beneficial trajectory-change solutions available at the time. The software application is designed for installation on low-cost Electronic Flight Bags that provide read-only access to avionics data. The user interface is also compatible with the popular iPad. FAA certification and operational approval requirements are expected to be minimal for this non-safety-critical flight-efficiency application, reducing implementation cost and accelerating adoption by the airspace user community.

Awarded "2016 NASA Software of the Year"

Selective Access and Editing in a Database

A complex project has many tasks and sub-tasks, many phases and many collaborators, and will often have an associated database with many users, each of whom has a limited need to know that does not extend to all information in the database. This invention relates to selective access to simultaneous editing of different portions of a database. It provides selective access to as many as 2^N-1 (or 2^N) mutually exclusive portions of a database by different subgroups of N users. One or more members of an access subgroup can edit a document or other information collection to which the members have access simultaneously. In this system the database receives information from one or more information sources and is queried by a plurality of users. The database permits selective access of a given user to different portions of the database, depending upon the user's identity and access permissions. Optionally, members of the same access subgroup can be assigned different numerical priorities in a queue so that, as between first and second users in the subgroup, draft editing of a document by the first user will subsequently be reviewed, declined, or either partly entered or wholly entered by the second user. User access to different portions of the database is initially determined when a user account is set up. User access can be subsequently changed according to the circumstances.
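The portion count can be checked with a short enumeration. The sketch below reads the figure as 2^N - 1 non-empty subgroups of N users (2^N if the empty subgroup is counted), which is an interpretation of the text rather than a statement from the patent. The user names, the `portion_owner` mapping, and the `can_access` helper are hypothetical.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List


def nonempty_subgroups(users: List[str]) -> List[FrozenSet[str]]:
    """Return every non-empty subgroup of the given users (2^N - 1 of them)."""
    return [
        frozenset(group)
        for size in range(1, len(users) + 1)
        for group in combinations(users, size)
    ]


def can_access(user: str, portion: str, portion_owner: Dict[str, FrozenSet[str]]) -> bool:
    """A user may open a portion only if they belong to its access subgroup."""
    return user in portion_owner[portion]


# Hypothetical users; each subgroup is given its own mutually exclusive portion.
users = ["ana", "ben", "cy"]
subgroups = nonempty_subgroups(users)
portion_owner = {f"portion_{i}": group for i, group in enumerate(subgroups)}

subgroup_count = len(subgroups)  # 2**3 - 1 == 7 for three users
```

Here `portion_0` belongs to the subgroup containing only `ana`, so `can_access("ben", "portion_0", portion_owner)` is false, while `portion_3` is shared by `ana` and `ben`.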

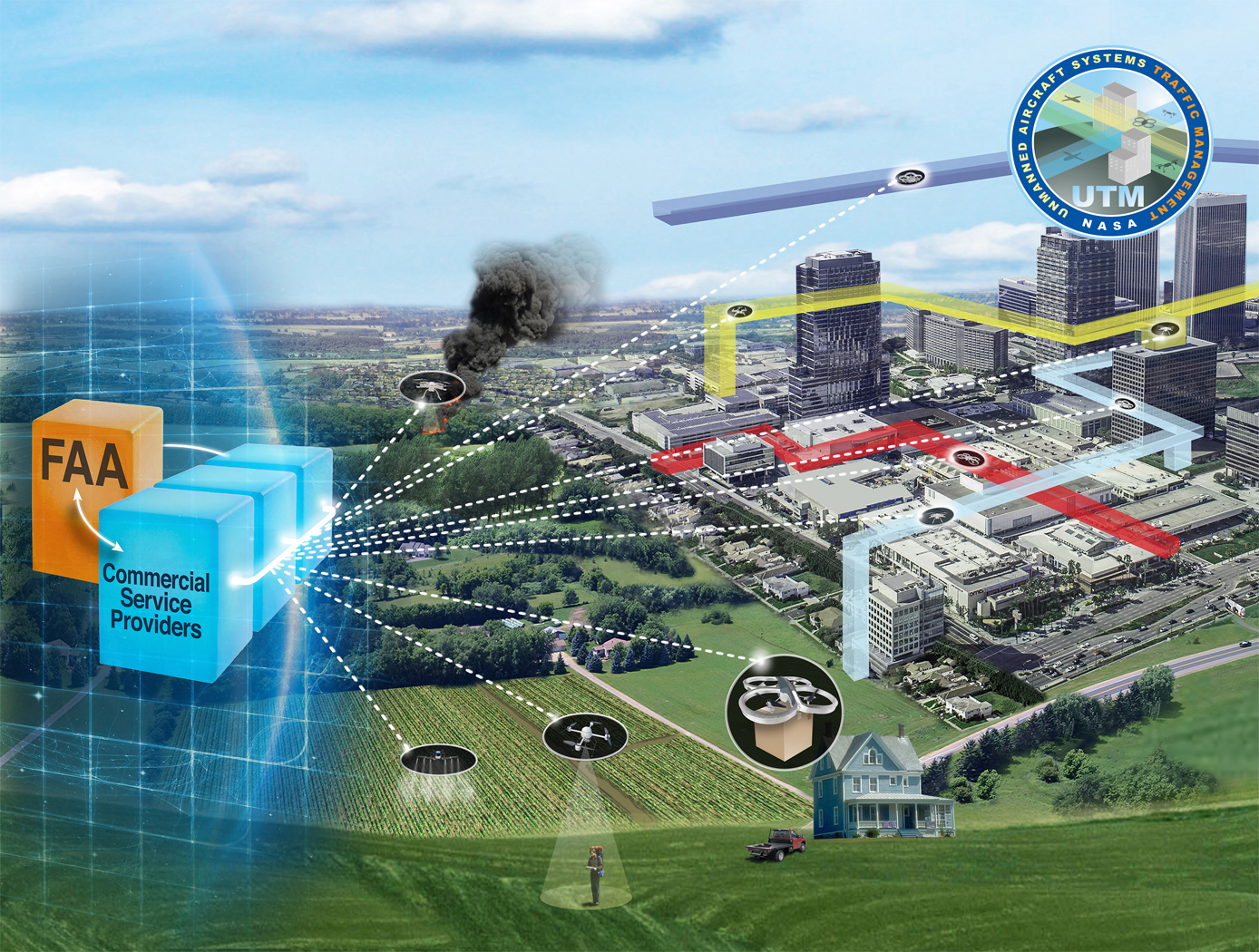

Near-Real Time Verification and Validation of Autonomous Flight Operations

NASA's Extensible Traffic Management (xTM) system allows for distributed management of the airspace where disparate entities collaborate to maintain a safe and accessible environment. This digital ecosystem relies on a common data generation and transfer framework enabled by well-defined data collection requirements, algorithms, protocols, and Application Programming Interfaces (APIs). The key components in this new paradigm are:

Data Standardization: Defines the list of data attributes/variables that are required to inform and safely perform the intended missions and operations.

Automated Real Time And/or Post-Flight Data Verification Process: Verifies system criteria, specifications, and data quality requirements using predefined, rule-based, or human-in-the-loop verification.

Autonomous Evolving Real Time And/or Post-Flight Data Validation Process: Validates data integrity, quantity, and quality for audit, oversight, and optimization.

The verification and validation process determines whether an operation’s performance, conformance, and compliance are within known variation. The technology can verify thousands of flight operations in near-real time or post flight in the span of a few minutes, depending on networking and computing capacity. In contrast, manual processing would have required hours, if not days, for a team of 2-3 experts to review an individual flight.
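A predefined, rule-based verification pass of the kind listed above might look like the following. This is a minimal sketch under stated assumptions: the field names, the thresholds (a 120 m ceiling and a 10 m position tolerance), and the `verify` function are all hypothetical and are not taken from the xTM specification.

```python
from typing import Callable, Dict, List

FlightRecord = Dict[str, float]
Rule = Callable[[FlightRecord], bool]

# Hypothetical standardized attributes every record is expected to carry.
REQUIRED_FIELDS = ("altitude_m", "speed_mps", "position_error_m")

# Hypothetical predefined rules; each returns True when the record passes.
# Missing values default to infinity so that they fail the threshold checks.
RULES: Dict[str, Rule] = {
    "has_required_fields": lambda r: all(f in r for f in REQUIRED_FIELDS),
    "altitude_within_ceiling": lambda r: r.get("altitude_m", float("inf")) <= 120.0,
    "position_error_within_tolerance": lambda r: r.get("position_error_m", float("inf")) <= 10.0,
}


def verify(record: FlightRecord) -> List[str]:
    """Return the names of the rules the record fails (empty list means pass)."""
    return [name for name, rule in RULES.items() if not rule(record)]


conforming = {"altitude_m": 90.0, "speed_mps": 12.0, "position_error_m": 3.5}
too_high = {"altitude_m": 150.0, "speed_mps": 12.0, "position_error_m": 3.5}

conforming_failures = verify(conforming)
too_high_failures = verify(too_high)
```

Because each check is a plain function over one record, a batch of thousands of flights can be processed by mapping `verify` over the records, and any flight with a non-empty failure list can be routed to human-in-the-loop review.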

STELLA-1.2

The STELLA-1.2 base unit includes a microcontroller, GPS, BME280 (temperature, pressure, humidity), and rotary control with display. Sensor modules include:

• Spectrometer: 18-band VNIR from 410–940nm, UV, light, IR, lidar

• Air Quality: CO₂ (SCD-40), PM2.5/PM10 (PMSA003I), methane

• Expandability: More modules via magnetic couplers and CircuitPython menu additions

First Stage Bootloader

The First Stage Bootloader reads software images from flash memory, utilizing redundant copies of the images, and launches the operating system. This bootloader finds a valid copy of the OS image and the ram filesystem image in flash memory. If none of the copies are fully valid, the bootloader attempts to construct a fully valid image by validating the images in small sections and piecing together validated sections from multiple copies.

Periodically, throughout this process, the First Stage Bootloader restarts the watchdog timer.

The First Stage Bootloader reads a boot table from a default location in flash memory. This boot table describes where to find the OS image and its supporting ram filesystem image in flash. It uses header information and a checksum to validate the table. If the table is corrupt, it reads the next copy until it finds a valid table. There can be many copies of the table in flash, and all will be read if necessary.

The First Stage Bootloader reads the ram filesystem image into memory and validates its contents. Similar to the boot table, if one copy of the image is corrupt, it will read the remaining copies until it finds one with a valid header. If it doesn't find a valid copy, it will break the image down into smaller portions. For each section, it checks each copy until it finds a valid copy of the section and copies the valid section into a new copy of the image.

The First Stage Bootloader reads the OS image and interprets it. If anything in the image is corrupt, it reads the remaining copies until it finds a fully valid copy. If no copy is fully valid, it will use individual valid records from multiple copies to create a fully valid image.
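The fallback from whole-image validation to section-by-section reconstruction can be sketched as follows. This is an illustration only, not the flight code: the section layout (a payload paired with a stored checksum), the use of CRC-32, and every function name are assumptions, and the periodic watchdog restart is omitted.

```python
import zlib
from typing import List, Optional, Tuple

# Assumed layout: each section is (payload, stored CRC-32 of the payload).
Section = Tuple[bytes, int]


def make_section(payload: bytes) -> Section:
    """Build a section with a matching checksum (used here to fake flash contents)."""
    return (payload, zlib.crc32(payload))


def section_is_valid(section: Section) -> bool:
    """A section is valid when its payload still matches its stored checksum."""
    payload, stored_crc = section
    return zlib.crc32(payload) == stored_crc


def reconstruct_image(copies: List[List[Section]]) -> Optional[bytes]:
    """Return a valid image from redundant copies, or None if that is impossible."""
    if not copies:
        return None
    # First choice: any copy whose sections are all valid.
    for copy in copies:
        if all(section_is_valid(s) for s in copy):
            return b"".join(payload for payload, _ in copy)
    # Fallback: for each section index, take the first valid section from any copy.
    rebuilt: List[bytes] = []
    for index in range(len(copies[0])):
        for copy in copies:
            if index < len(copy) and section_is_valid(copy[index]):
                rebuilt.append(copy[index][0])
                break
        else:
            return None  # No copy holds a valid version of this section.
    return b"".join(rebuilt)


# Two damaged copies: each has a different corrupted section.
good = [make_section(b"AAAA"), make_section(b"BBBB")]
copy_one = [(b"AXAA", good[0][1]), good[1]]
copy_two = [good[0], (b"BBXB", good[1][1])]

image = reconstruct_image([copy_one, copy_two])
```

Neither copy is fully valid here, yet the rebuilt image is complete because the corruption falls in different sections of each copy. If the same section were damaged in every copy, the function would return `None`.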

Enhanced Project Management Tool

A searchable skill set module lists the name of each worker employed by the company and/or employed by one or more companies that contract services for the company, and a list of skills possessed by each such worker. When the system receives a description of a skill set that is needed for a project, the skill set module is queried. The name and relevant skill(s) of each worker who has at least one skill in the received skill set are displayed in a visually perceptible format.
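The skill set query can be sketched as a set intersection. This is a minimal illustration that assumes the module is held as an in-memory mapping; the worker names, skills, and the `match_workers` function are hypothetical.

```python
from typing import Dict, List, Set


def match_workers(skill_module: Dict[str, Set[str]], needed: Set[str]) -> Dict[str, List[str]]:
    """Return each worker who has at least one needed skill, with those skills."""
    matches: Dict[str, List[str]] = {}
    for name, skills in skill_module.items():
        relevant = sorted(skills & needed)  # Skills that are both held and needed.
        if relevant:
            matches[name] = relevant
    return matches


# Hypothetical skill set module covering employees and contractors.
skill_module = {
    "Rivera": {"thermal analysis", "python"},
    "Okafor": {"welding", "cad"},
    "Lindqvist": {"python", "cad", "systems engineering"},
}

matches = match_workers(skill_module, {"python", "cad"})
```

Each entry in `matches` pairs a worker's name with only the skills relevant to the request, which is the information the text says is displayed to the user.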

Provisions are made for customizing and linking, where feasible, a subset of reports and accompanying illustrations for a particular user, and for adding or deleting other reports, as needed. This allows a user to focus on the 15 reports of immediate concern and to avoid sorting through reports and related information that is not of concern.

Implementation of this separate-storage option would allow most or all users who have review access to a document to write, edit, and otherwise modify the original version, by storing the modified version only in the user's own memory space. Where a user who does not have at least review access to a report explicitly requests that report, the system optionally informs this user of the lack of review access and recommends that the user contact the system administrator. The system optionally stores preceding versions of a present report for the preceding N periods for historical purposes. The comparative analysis includes an ability to retrieve and reformat numerical data for a contemplated comparison.

Otoacoustic Protection In Biologically-Inspired Systems

This innovation is an autonomic method capable of transmitting a neutralizing data signal to counteract a potentially harmful signal. This otoacoustic component of an autonomic unit can render a potentially harmful incoming signal inert. For self-managing systems, the technology can offer a self-defense capability that brings new levels of automation and dependability to systems.
