information technology and software

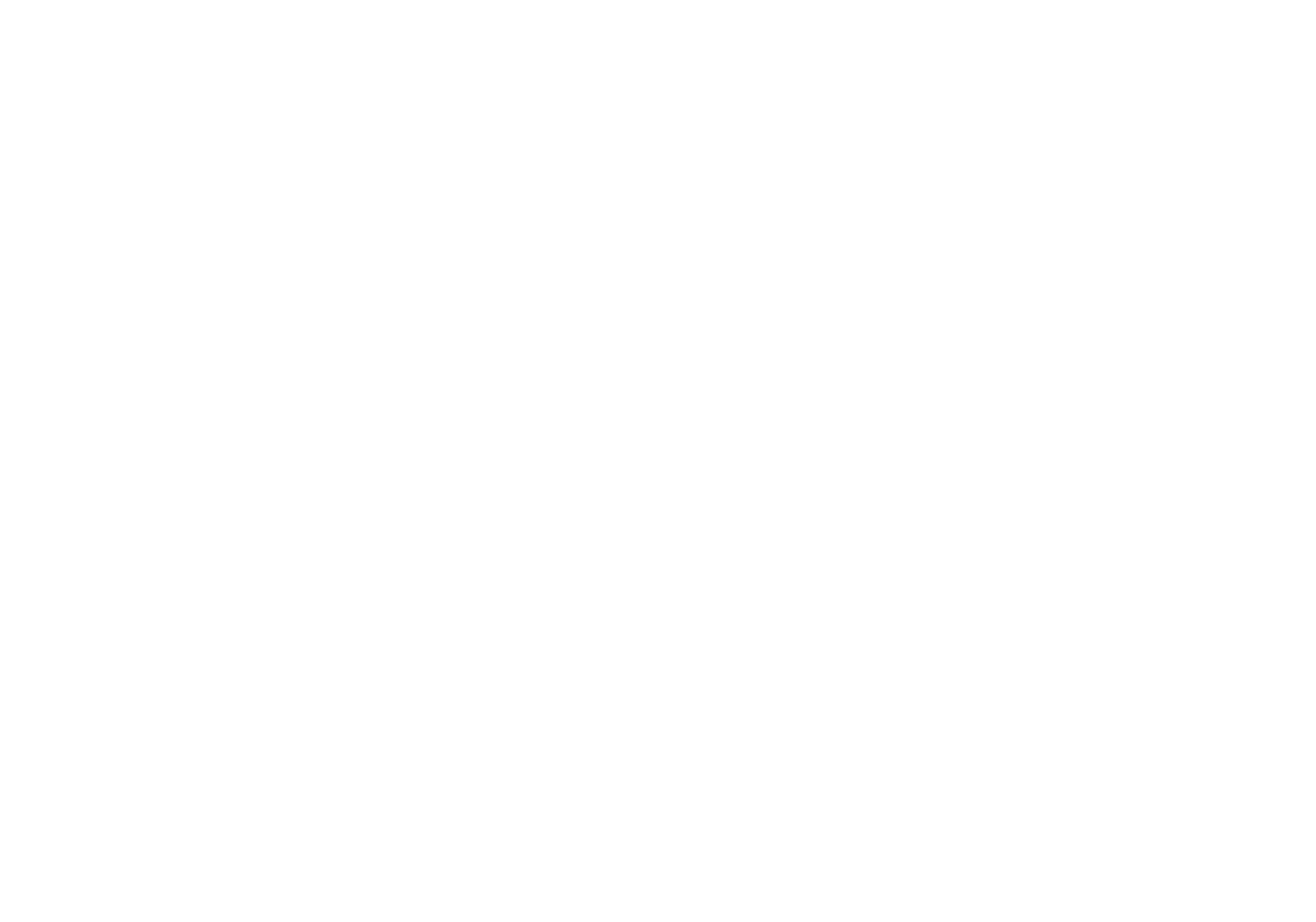

Hierarchical Image Segmentation (HSEG)

Currently, HSEG software is being used by Bartron Medical Imaging as a diagnostic tool to enhance medical imagery. Bartron Medical Imaging licensed the HSEG technology from NASA Goddard, added color enhancement, and developed MED-SEG, an FDA-approved tool that helps specialists interpret medical images.

HSEG is available for licensing outside of the medical field (specifically for soft-tissue analysis).

sensors

Method and Device for Biometric Verification and Identification

The advantage of cardiac biometrics over existing methods is that heart signatures are more difficult to forge than other biometric traits. Iris scanners can be fooled by contact lenses and sunglasses, and a segment of the population does not have readable fingerprints due to age or working conditions. Previous electrocardiographic methods employed a single template and compared that template with new test templates by means of cross-correlation or linear-discriminant analysis.

The benefit of this technology over competing cardiac biometric methods is that it is more reliable, with a significant reduction in error rates. It creates a probabilistic model of a person's electrocardiographic features instead of a single signal template of the average heartbeat. The probabilistic model, a Gaussian mixture model, allows for various modes of the feature distribution, in contrast to a template model that characterizes only a mean waveform. Another advantage is that the model uses both physiological and anatomical characterization of the heart, unlike other methods that mainly use only physiological characterization. By combining features from different leads, the heart is better characterized in terms of anatomical orientation, because each lead represents a different projection of the electrical vector of the heart. Thus, employing multiple electrocardiographic leads provides better performance in subject verification or identification.
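The contrast between a single mean template and a mixture model can be illustrated with a minimal sketch. It is not the patented method: the two-dimensional feature vectors (one hypothetical feature from each of two leads), the mixture parameters, and the acceptance threshold are all made-up assumptions, and a real system would fit the mixture from enrollment data rather than hard-code it.

```python
import math

def diag_gaussian_logpdf(x, mean, var):
    """Log density of a diagonal-covariance Gaussian at feature vector x."""
    return sum(
        -0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
        for xi, m, v in zip(x, mean, var)
    )

def gmm_loglik(x, components):
    """Log-likelihood of x under a mixture of (weight, mean, var) components."""
    logs = [math.log(w) + diag_gaussian_logpdf(x, m, v) for w, m, v in components]
    top = max(logs)
    # log-sum-exp keeps the sum numerically stable
    return top + math.log(sum(math.exp(l - top) for l in logs))

def verify(beats, components, threshold):
    """Accept the claimed identity if the mean per-beat log-likelihood clears the threshold."""
    score = sum(gmm_loglik(b, components) for b in beats) / len(beats)
    return score >= threshold, score

# Hypothetical enrolled model with two modes of the feature distribution.
enrolled = [
    (0.6, [1.0, 0.5], [0.01, 0.01]),
    (0.4, [1.3, 0.7], [0.01, 0.01]),
]
genuine_beats = [[1.02, 0.49], [1.28, 0.72], [0.97, 0.52]]
impostor_beats = [[0.60, 0.90], [0.62, 0.88]]
THRESHOLD = 0.0  # assumed value, chosen only for this toy data

genuine_ok, genuine_score = verify(genuine_beats, enrolled, THRESHOLD)
impostor_ok, impostor_score = verify(impostor_beats, enrolled, THRESHOLD)
```

Because the mixture has two modes, beats near either mode score well, whereas a single averaged template would sit between the modes and match neither closely.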

optics

Video Acuity Measurement System

The Video Acuity metric is designed to provide a unique and meaningful measurement of the quality of a video system. The automated system for measuring video acuity is based on a model of human letter recognition. The Video Acuity measurement system comprises a camera with its associated optics and sensor, processing elements including digital compression, transmission over an electronic network, and an electronic display for viewing by a human observer. The quality of a video system affects the ability of the human viewer to perform public safety tasks, such as reading automobile license plates, recognizing faces, and recognizing handheld weapons. The Video Acuity metric can accurately measure the effects of sampling, blur, noise, quantization, compression, geometric distortion, and other effects. This is because it does not rely on any particular theoretical model of imaging, but simply measures performance in a task that incorporates essential aspects of human use of video, notably recognition of patterns and objects. Because the metric is structurally identical to human visual acuity, the numbers that it yields have immediate and concrete meaning. Furthermore, they can be related to the human visual acuity needed to do the task. The Video Acuity measurement system uses different sets of optotypes and automated letter recognition to simulate the human observer.

information technology and software

Otoacoustic Protection In Biologically-Inspired Systems

This innovation is an autonomic method capable of transmitting a neutralizing data signal to counteract a potentially harmful signal. This otoacoustic component of an autonomic unit can render a potentially harmful incoming signal inert. For self-managing systems, the technology can offer a self-defense capability that brings new levels of automation and dependability to systems.

information technology and software

Inductive Monitoring System

The Inductive Monitoring System (IMS) software provides a method of building an efficient system health monitoring software module by examining data covering the range of nominal system behavior in advance and using parameters derived from that data for the monitoring task. This software module also has the capability to adapt to the specific system being monitored by augmenting its monitoring database with initially suspect system parameter sets encountered during monitoring operations, which are later verified as nominal. While the system is offline, IMS learns nominal system behavior from archived system data sets collected from the monitored system or from accurate simulations of the system. This training phase automatically builds a model of nominal operations, and stores it in a knowledge base. The basic data structure of the IMS software algorithm is a vector of parameter values. Each vector is an ordered list of parameters collected from the monitored system by a data acquisition process. IMS then processes select data sets by formatting the data into a predefined vector format and building a knowledge base containing clusters of related value ranges for the vector parameters. In real time, IMS then monitors and displays information on the degree of deviation from nominal performance. The values collected from the monitored system for a given vector are compared to the clusters in the knowledge base. If all the values fall into or near the parameter ranges defined by one of these clusters, it is assumed to be nominal data since it matches previously observed nominal behavior. The IMS knowledge base can also be used for offline analysis of archived data.
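The training and monitoring phases described above can be sketched as follows. This is an illustrative simplification, not the IMS implementation: the cluster-growth rule, the `tolerance` parameter, the Euclidean distance measure, and the sample data are all assumptions introduced here.

```python
import math

def distance_to_cluster(vec, cluster):
    """Euclidean distance from vec to the box of per-parameter (low, high) ranges."""
    total = 0.0
    for x, (lo, hi) in zip(vec, cluster):
        gap = lo - x if x < lo else x - hi if x > hi else 0.0
        total += gap * gap
    return math.sqrt(total)

def train(nominal_vectors, tolerance):
    """Build clusters of value ranges from nominal data (offline training phase)."""
    clusters = []
    for vec in nominal_vectors:
        best = min(clusters, key=lambda c: distance_to_cluster(vec, c), default=None)
        if best is not None and distance_to_cluster(vec, best) <= tolerance:
            # Close enough: widen the nearest cluster's ranges to include vec.
            for i, x in enumerate(vec):
                lo, hi = best[i]
                best[i] = (min(lo, x), max(hi, x))
        else:
            # Too far from every cluster: start a new one at this point.
            clusters.append([(x, x) for x in vec])
    return clusters

def deviation(vec, clusters):
    """Distance to the nearest cluster; 0.0 means vec matches observed nominal behavior."""
    return min(distance_to_cluster(vec, c) for c in clusters)

# Hypothetical two-parameter vectors covering two nominal operating modes.
nominal = [[10.0, 1.0], [10.5, 1.1], [11.0, 1.2], [50.0, 5.0], [50.4, 5.1]]
knowledge_base = train(nominal, tolerance=1.0)

inside = deviation([10.2, 1.05], knowledge_base)   # falls within a learned range
outside = deviation([30.0, 3.0], knowledge_base)   # far from both operating modes
```

Adding a suspect vector that is later verified as nominal amounts to calling the same range-widening step on the existing knowledge base.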

sensors

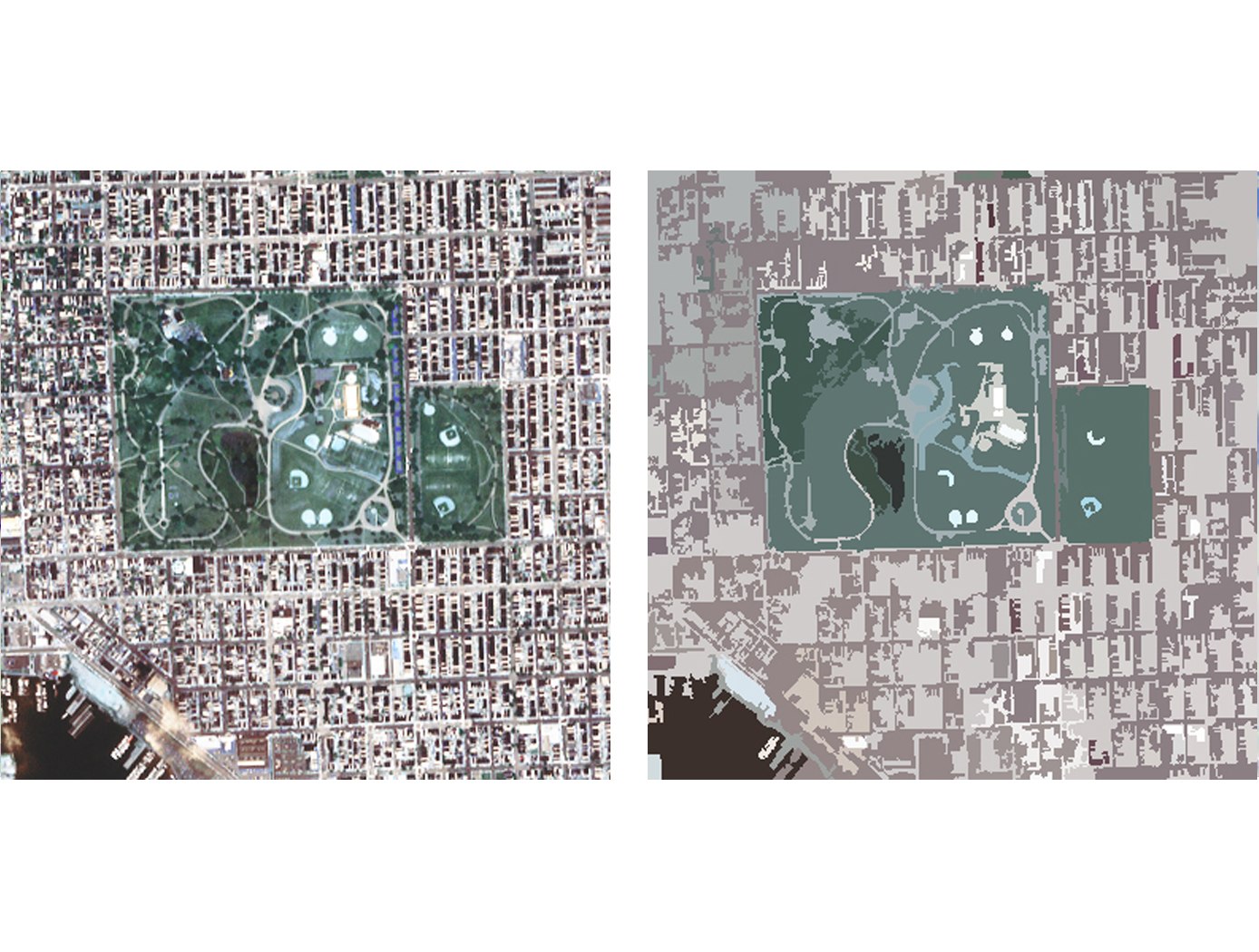

Biomarker Sensor Arrays for Microfluidics Applications

This invention provides a method and system for fabricating a biomarker sensor array by dispensing one or more entities using a precisely positioned, electrically biased nanoprobe immersed in a buffered fluid over a transparent substrate. Fine patterning of the substrate can be achieved by positioning and selectively biasing the probe in a particular region, changing the pH in a sharp, localized volume of fluid less than 100 nm in diameter, resulting in selective processing of that region. One example implementation of this technique is related to Dip-Pen Nanolithography (DPN), where an Atomic Force Microscope probe can be used as a pen to write protein and DNA aptamer inks on a transparent substrate functionalized with silane-based self-assembled monolayers. However, the invention has a much broader range of applicability. For example, it can be applied to the formation of patterns using biological materials, chemical materials, metals, polymers, semiconductors, small molecules, organic and inorganic thin films, or any combination of these.

sensors

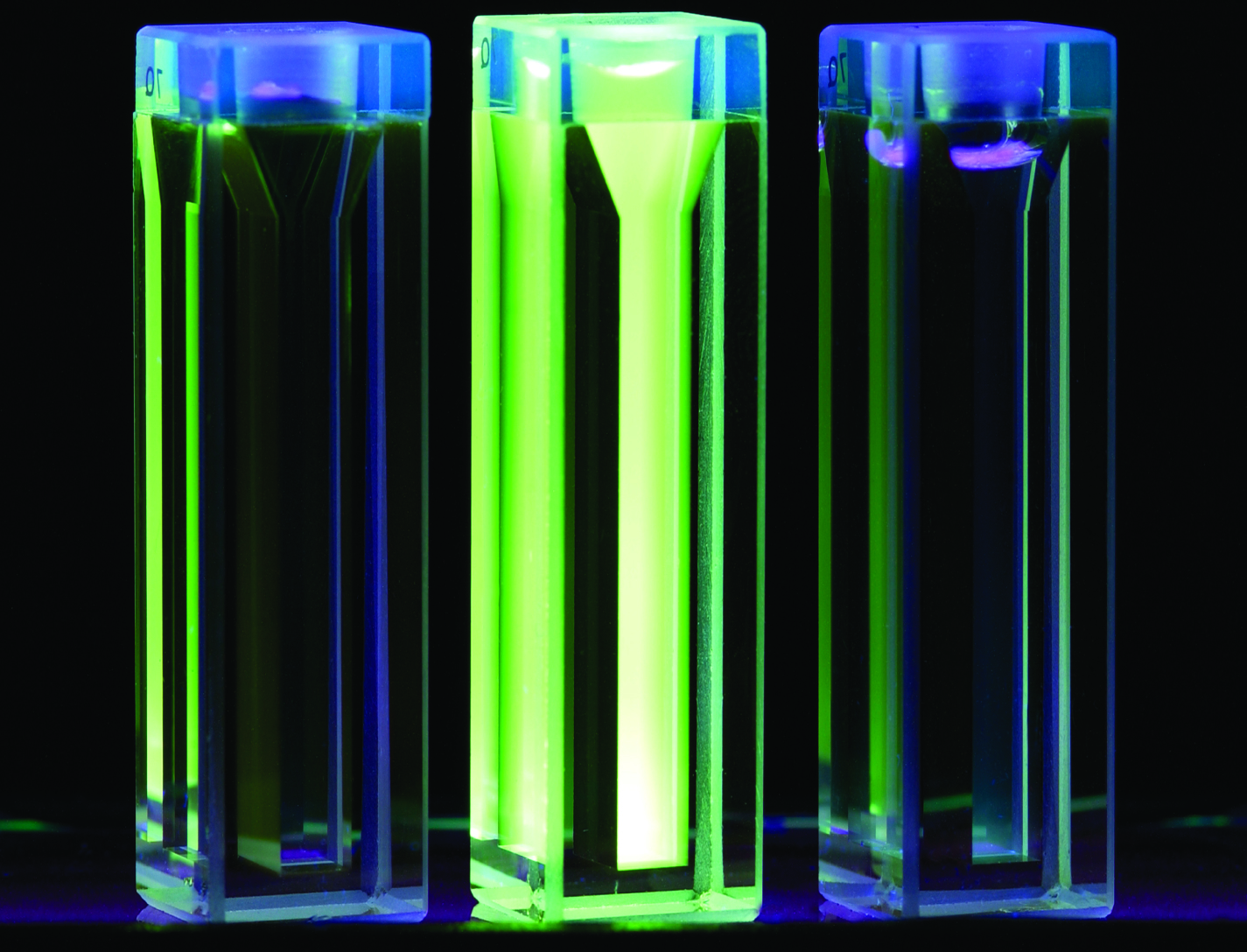

Electric Field Imaging System

The EFI imaging platform consists of a sensor array, processing equipment, and an output device. By registering voltage differences at multiple points within the sensor array, the EFI system can calculate the electrical potential at points removed from the sensor. Using techniques similar to computed tomography, the electrical potential data can be assembled into a three-dimensional map of the magnitude and direction of electric fields. Since objects interact with electric fields differently based on their shape and dielectric properties, this electric field data can then be used to understand the shape, internal structure, and dielectric properties (e.g., impedance, resistance) of objects in three dimensions.

The EFI sensor can be used on its own to see electric fields or to image electric field-emitting objects near the sensor (e.g., to evaluate leakage from poorly shielded wires or casings). For evaluation of objects that do not produce an electric field, NASA has developed a generator that emits a low-current, human-safe electrostatic field for snapshot evaluation of objects. Additionally, an alternative EFI system optimized to evaluate electric fields at significant distances (greater than 1 mile) is being developed for weather-related applications.

information technology and software

Automata Learning in Generation of Scenario-Based Requirements in System Development

The higher the level of abstraction that developers can work from, as afforded by the use of scenarios to describe system behavior, the less likely it is that a mismatch will occur between requirements and implementation, and the more likely it is that the system can be validated. Working from a higher level of abstraction also means that errors in the system are more easily caught, since developers can more easily see the big picture of the system.

This technology is a technique for fully tractable code generation from requirements. It has applications in other areas, such as generation and verification of scripts and procedures and generation and verification of policies for autonomic systems, and it may have future applications in security and software safety. The approach accepts requirements expressed as a set of scenarios and converts them to a process-based description. The more complete the set of scenarios, the better the quality of the process-based description that is generated. The proposed technology, which uses automata learning to generate possible additional scenarios, can help complete the description of the requirements.
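As a rough illustration of turning a set of scenarios into a state-based description, the sketch below builds a prefix-tree automaton from event sequences. A prefix tree is a common starting structure in automata learning, but the source does not state which construction this technology uses; the function names and the sample scenarios are hypothetical.

```python
def build_prefix_tree(scenarios):
    """Return ({state: {event: next_state}}, accepting_states) for the given scenarios."""
    transitions = {0: {}}  # state 0 is the initial state
    accepting = set()
    for scenario in scenarios:
        state = 0
        for event in scenario:
            if event not in transitions[state]:
                # Unseen event from this state: allocate a fresh state for it.
                new_state = len(transitions)
                transitions[state][event] = new_state
                transitions[new_state] = {}
            state = transitions[state][event]
        accepting.add(state)  # the end of a scenario is a valid stopping point
    return transitions, accepting

def accepts(transitions, accepting, trace):
    """Check whether a trace of events is one of the behaviors described so far."""
    state = 0
    for event in trace:
        if event not in transitions[state]:
            return False
        state = transitions[state][event]
    return state in accepting

# Hypothetical scenarios for a resource-allocation requirement.
scenarios = [
    ["request", "grant", "release"],
    ["request", "deny"],
]
transitions, accepting = build_prefix_tree(scenarios)
```

A learning step would then propose traces that the automaton does not yet accept, such as `["request", "grant"]`, as candidate additional scenarios for a developer to confirm or reject.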

communications

Smart Enclosure using RFID for Inventory Tracking

The smart enclosure innovation employs traditional RFID cavities, resonators, and filters to provide standing electromagnetic waves within the enclosed volume in order to provide a pervasive field distribution of energy. A high level of read accuracy is achieved by using the contained electromagnetic field levels within the smart enclosure. With this method, more item-level tags are successfully identified compared to approaches in which the items are radiated by an incident plane wave. The use of contained electromagnetic fields reduces the cost of the tag antenna, making it cost-effective to tag smaller items.

RFID-enabled conductive enclosures have been previously developed, but did not employ specific cavity-design techniques to optimize performance within the enclosure. Also, specific cavity feed approaches provide much better distribution of fields for higher read accuracy. This technology does not restrict the enclosure surface to rectangular or cylindrical shapes; other enclosure forms can also be used. For example, the technology has been demonstrated in textiles such as duffle bags and backpacks. Potential commercial applications include inventory tracking for containers such as waste receptacles, storage containers, and conveyor belts used in grocery checkout stations.

information technology and software

The Hilbert-Huang Transform Real-Time Data Processing System

The present innovation is an engineering tool known as the HHT Data Processing System (HHTDPS). The HHTDPS allows applying the Transform, or 'T,' to a data vector in a fashion similar to the heritage FFT. It is a generic, low-cost, high-performance personal computer (PC) based system that implements the HHT computational algorithms in a user-friendly, file-driven environment. Unlike other signal processing techniques such as the Fast Fourier Transform (FFT1 and FFT2) that assume signal linearity and stationarity, the Hilbert-Huang Transform (HHT) utilizes relationships between arbitrary signals and local extrema to find the signal's instantaneous spectral representation.

Using the Empirical Mode Decomposition (EMD) followed by the Hilbert Transform of the empirical decomposition data, the HHT allows spectrum analysis of nonlinear and nonstationary data through a posteriori data processing based on the EMD algorithm. This results in a non-constrained decomposition of a real-valued source data vector into a finite set of Intrinsic Mode Functions (IMFs) that can be further analyzed for spectrum interpretation by the classical Hilbert Transform.
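The role of local extrema in EMD can be illustrated with a single sifting pass, sketched below under simplifying assumptions. It is not the HHTDPS implementation: envelopes here are piecewise linear and anchored at the signal's end points, whereas EMD implementations typically use cubic splines and more careful boundary handling, and a full decomposition repeats the pass until a stopping criterion is met. The test signal is made up.

```python
import math

def local_extrema(x):
    """Indices of strict local maxima and minima of sequence x."""
    maxima, minima = [], []
    for i in range(1, len(x) - 1):
        if x[i - 1] < x[i] > x[i + 1]:
            maxima.append(i)
        elif x[i - 1] > x[i] < x[i + 1]:
            minima.append(i)
    return maxima, minima

def envelope(x, knots):
    """Piecewise-linear envelope through x at the knot indices, anchored at both ends."""
    pts = sorted(set([0, len(x) - 1] + knots))
    env = [0.0] * len(x)
    for a, b in zip(pts, pts[1:]):
        for i in range(a, b + 1):
            t = (i - a) / (b - a)
            env[i] = x[a] + t * (x[b] - x[a])
    return env

def sift_once(x):
    """Subtract the mean of the upper and lower envelopes from the signal."""
    maxima, minima = local_extrema(x)
    upper = envelope(x, maxima)
    lower = envelope(x, minima)
    return [xi - 0.5 * (u + l) for xi, u, l in zip(x, upper, lower)]

# Made-up test signal: an oscillation riding on a slow linear trend.
signal = [math.sin(2 * math.pi * n / 8) + 0.05 * n for n in range(64)]
candidate = sift_once(signal)

signal_mean = sum(signal) / len(signal)
candidate_mean = sum(candidate) / len(candidate)
```

After one pass the slow trend is largely removed from `candidate`, leaving the oscillatory component as a first approximation of an IMF; the residue `signal - candidate` would be sifted again to extract further components.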

The HHTDPS has a large variety of applications and has been used in several NASA science missions.

NASA cosmology science missions, such as Joint Dark Energy Mission (JDEM/WFIRST), carry instruments with multiple focal planes populated with many large sensor detector arrays with sensor readout electronics circuitry that must perform at extremely low noise levels.

A new methodology and implementation platform using the HHTDPS for readout noise reduction in large IR/CMOS hybrid sensors was developed at NASA Goddard Space Flight Center (GSFC). Scientists at NASA GSFC have also used the algorithm to produce the first known Hilbert-Transform based wide-field broadband data cube constructed from actual interferometric data.

Furthermore, HHT has been used to improve signal reception capability in radio frequency (RF) communications.

This NASA technology is currently available to the medical community to help in the diagnosis and prediction of syndromes that affect the brain, such as stroke, dementia, and traumatic brain injury.

The HHTDPS is available for non-exclusive and partial field of use licenses.