Super Resolution 3D Flash LIDAR

Super Resolution 3D Flash LIDAR (LAR-TOPS-168)

Real-time algorithm producing 1M pixels or greater 3D image frames

Overview

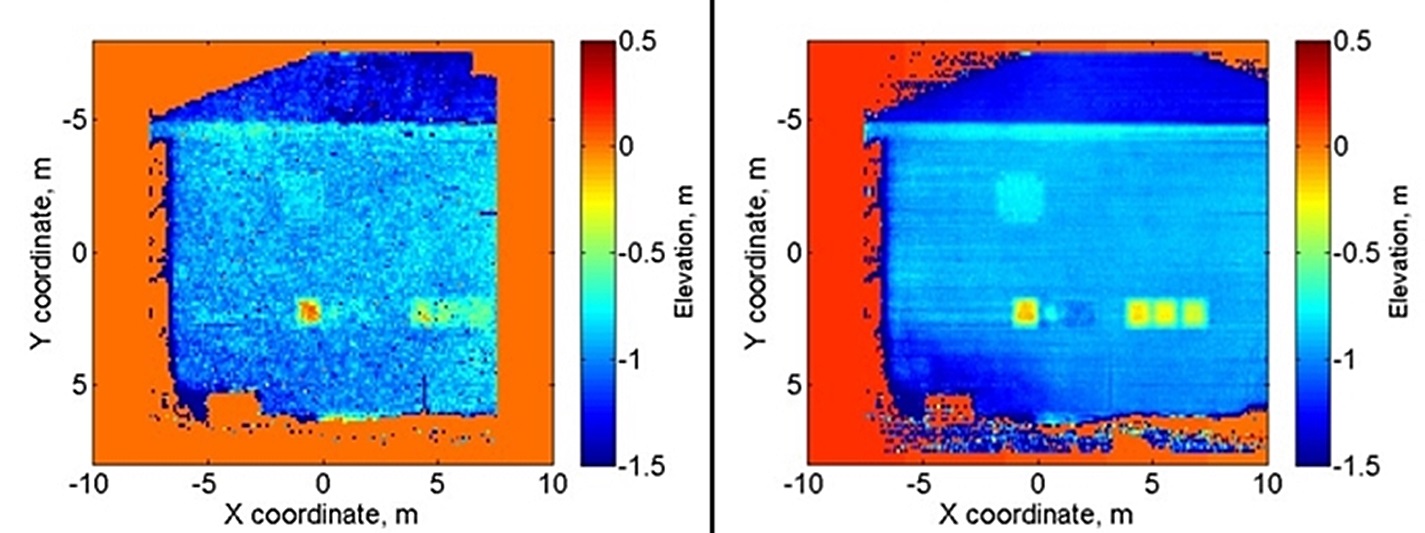

NASA Langley Research Center has developed 3D imaging technologies (Flash LIDAR) for real-time terrain mapping and synthetic vision-based navigation. To take advantage of the information inherent in a sequence of 3D images acquired at video rates, NASA Langley has also developed an embedded image processing algorithm that simultaneously corrects and enhances the images and derives relative motion by processing the image sequence into a high-resolution 3D synthetic image. Traditional scanning LIDAR techniques generate an image frame by raster scanning, one laser pulse per pixel, whereas Flash LIDAR acquires an image much like an ordinary camera, generating the whole image from a single laser pulse. The benefits of the Flash LIDAR technique and the corresponding image-to-image processing enable autonomous vision-based guidance and control for robotic systems. The current algorithm offers up to eightfold image resolution enhancement as well as a 6-degree-of-freedom state vector of motion in the image frame.

The Technology

This suite of technologies includes a method, algorithms, and computer processing techniques that provide image photometric correction and resolution enhancement at video rates (30 frames per second). This 3D (2D spatial and range) resolution enhancement uses the spatial and range information contained in each image frame, in conjunction with a sequence of overlapping or persistent images, to simultaneously enhance the spatial resolution and the range and photometric accuracies. In other words, the technologies allow an elevation (3D) map of a targeted area (e.g., terrain) to be generated with much enhanced resolution by blending consecutive camera image frames. The degree of image resolution enhancement increases with the number of acquired frames.
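The source does not describe the algorithm's internals, so the following is only a minimal sketch of one generic way to blend frames, often called shift-and-add: low-resolution range frames with known sub-pixel offsets are accumulated onto a finer grid. The function names and the assumption that the offsets are already known are hypothetical; a real system would have to estimate the motion from the images themselves.

```python
def shift_and_add(frames, offsets, factor):
    """Fuse low-res frames onto a grid `factor` times finer.

    frames  : list of 2D lists (rows x cols) of range values
    offsets : list of (dy, dx) shifts in fine-grid pixels, 0 <= d < factor
              (assumed known here; a real system would estimate them)
    factor  : integer upsampling factor
    """
    rows, cols = len(frames[0]), len(frames[0][0])
    total = [[0.0] * (cols * factor) for _ in range(rows * factor)]
    count = [[0] * (cols * factor) for _ in range(rows * factor)]
    for frame, (dy, dx) in zip(frames, offsets):
        for r in range(rows):
            for c in range(cols):
                fr, fc = r * factor + dy, c * factor + dx
                total[fr][fc] += frame[r][c]
                count[fr][fc] += 1
    # Average where samples landed; None marks fine pixels not yet observed.
    return [[total[r][c] / count[r][c] if count[r][c] else None
             for c in range(cols * factor)]
            for r in range(rows * factor)]


def sample(scene, dy, dx, factor):
    """Simulate one low-res frame by picking every `factor`-th scene pixel."""
    return [row[dx::factor] for row in scene[dy::factor]]


# A 4x4 "true" elevation map observed as four 2x2 frames with different offsets.
scene = [[float(4 * r + c) for c in range(4)] for r in range(4)]
offsets = [(0, 0), (0, 1), (1, 0), (1, 1)]
frames = [sample(scene, dy, dx, 2) for dy, dx in offsets]
fused = shift_and_add(frames, offsets, 2)
```

With all four frames the fine grid is fully populated, whereas a single frame fills only a quarter of it. That mirrors the statement that enhancement grows with the number of acquired frames.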

Benefits

- Improved spatial resolution of 3D flash LIDAR video images by a factor of 8

- Provides platform relative position and attitude angles

- Video-rate processing (30 frames per second) and high-speed data rates

Applications

- Autonomous rover and robot guidance and control

- On-orbit inspection and servicing

- Topographical/terrain mapping

- Automotive collision avoidance, adaptive cruise control, situational awareness

- Already licensed exclusively for space, air, land and sub-aquatic vehicle navigation.

Similar Results

Photon-Efficient Scanning LiDAR System

This new methodology selectively scans an area of interest and effectively pre-compresses the image data. Instead of using LiDAR resources to gather redundant data, only the necessary data is gathered and the redundancy can be used to fill in up-sampled data using intelligent completion algorithms. The system utilizes a unique LiDAR system to collect a pattern of specific points across a given area by modulating the incoming light, creating a pattern that can be decoded computationally to reconstruct a scene. By designing specific coding patterns, the system can strategically skip certain measurements during the scanning process to create an under-sampled image area.

The system reconstructs the under-sampled area to recreate an accurate representation of the original object or area being scanned. As a result, the system avoids collecting redundant data: fewer measurements are required and less data must be condensed after collection, which reduces power consumption. By selectively skipping certain pixels during the scan and using sophisticated recovery algorithms to reconstruct the omitted information, the system makes more efficient use of the available photons, thereby enhancing overall data collection.
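The skip-then-reconstruct idea can be illustrated with a toy example. The sketch below assumes a checkerboard sampling pattern and fills each skipped pixel with the mean of its measured neighbours. Both choices are stand-ins picked for illustration, since the source does not specify the coding patterns or the completion algorithm.

```python
def checkerboard_mask(rows, cols):
    """True where the scanner takes a measurement, False where it skips."""
    return [[(r + c) % 2 == 0 for c in range(cols)] for r in range(rows)]


def scan(scene, mask):
    """Measure only the masked pixels; skipped pixels are None."""
    return [[scene[r][c] if mask[r][c] else None
             for c in range(len(scene[0]))]
            for r in range(len(scene))]


def fill_skipped(measured):
    """Fill each skipped pixel with the mean of its measured 4-neighbours."""
    rows, cols = len(measured), len(measured[0])
    out = [row[:] for row in measured]
    for r in range(rows):
        for c in range(cols):
            if measured[r][c] is None:
                neighbours = [
                    measured[nr][nc]
                    for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                    if 0 <= nr < rows and 0 <= nc < cols
                    and measured[nr][nc] is not None
                ]
                out[r][c] = sum(neighbours) / len(neighbours)
    return out


# On a smooth ramp, interior skipped pixels are recovered exactly;
# skipped pixels on the image border are only approximated.
scene = [[float(r + c) for c in range(4)] for r in range(4)]
mask = checkerboard_mask(4, 4)
measured = scan(scene, mask)
recovered = fill_skipped(measured)
pulses_used = sum(v is not None for row in measured for v in row)
```

Here half of the 16 pixels are measured, and the rest are filled in computationally.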

This technology represents a significant advancement in LiDAR systems: it offers a more useful method for data collection and processing and addresses the challenges of power consumption and data redundancy, allowing for more sustainable and effective remote sensing applications. It can offer advantages in applications such as mapping for construction, surveying, forestry, or farming, as well as computer vision for vehicles or robotics.

FlashPose: Range and intensity image-based terrain and vehicle relative pose estimation algorithm

Flashpose is the combination of software written in C and FPGA firmware written in VHDL. It is designed to run under the Linux OS environment in an embedded system or within a custom development application on a Linux workstation. The algorithm is based on the classic Iterative Closest Point (ICP) algorithm originally proposed by Besl and McKay. Basically, the algorithm takes in a range image from a three-dimensional imager, filters and thresholds the image, and converts it to a point cloud in the Cartesian coordinate system. It then minimizes the distances between the point cloud and a model of the target at the origin of the Cartesian frame by manipulating point cloud rotation and translation. This procedure is repeated a number of times for a single image until a predefined mean square error metric is met; at this point the process repeats for a new image.

The rotation and translation operations performed on the point cloud represent an estimate of relative attitude and position, otherwise known as pose.
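The match-align-repeat loop described above can be sketched compactly. The version below is a simplified illustration, not the FlashPose implementation: it works in 2D so that the rigid alignment has a closed form without a matrix library, it omits the filtering and thresholding step, and all function names and tolerances are hypothetical.

```python
import math


def best_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping paired src -> dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, (dx - (c * sx - s * sy), dy - (s * sx + c * sy))


def transform(points, theta, t):
    """Rotate points by theta about the origin, then translate by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]


def icp_2d(cloud, model, max_iters=50, mse_tol=1e-12):
    """Align `cloud` to `model`; returns accumulated theta, (tx, ty), and MSE."""
    theta_total, t_total = 0.0, (0.0, 0.0)
    current = list(cloud)
    mse = float("inf")
    for _ in range(max_iters):
        # Pair each cloud point with its closest model point.
        matches = [min(model, key=lambda m: (m[0] - p[0]) ** 2 + (m[1] - p[1]) ** 2)
                   for p in current]
        theta, t = best_rigid_2d(current, matches)
        current = transform(current, theta, t)
        # Compose the incremental step with the running pose estimate.
        c, s = math.cos(theta), math.sin(theta)
        t_total = (c * t_total[0] - s * t_total[1] + t[0],
                   s * t_total[0] + c * t_total[1] + t[1])
        theta_total += theta
        mse = sum((m[0] - p[0]) ** 2 + (m[1] - p[1]) ** 2
                  for p, m in zip(current, matches)) / len(current)
        if mse < mse_tol:
            break
    return theta_total, t_total, mse


# An asymmetric L-shaped model, viewed after a small rotation and shift.
model = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (0.0, 1.0), (0.0, 2.0)]
cloud = transform(model, 0.05, (0.1, -0.05))
theta, t, mse = icp_2d(cloud, model)
```

The returned `theta` and `t` are the accumulated rotation and translation that bring the cloud onto the model, which is the pose estimate in this simplified setting. The full 3D problem solves the same alignment step with a rotation matrix or quaternion in place of a single angle.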

In addition to 6 degree of freedom (DOF) pose estimation, Flashpose also provides a range and bearing estimate relative to the sensor reference frame. This estimate is based on a simple algorithm that generates a configurable histogram of range information, and analyzes characteristics of the histogram to produce the range and bearing estimate. This can be generated quickly and provides valuable information for seeding the Flashpose ICP algorithm as well as external optical pose algorithms and relative attitude Kalman filters.
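As a rough illustration of a histogram-based estimate, the sketch below bins the valid ranges, takes the most populated bin as the target, and derives bearing from the mean pixel position of that bin. The peak-bin rule, the linear pixel-to-angle mapping, and every parameter name are assumptions made for this example; the source does not say which histogram characteristics are analyzed.

```python
def range_and_bearing(range_image, bin_width, fov_deg, max_range):
    """Estimate target range and azimuth/elevation bearing from a range image.

    Assumption: the target is the most populated range bin, and bearing is
    linear in pixel offset from the image centre across a square field of view.
    """
    rows, cols = len(range_image), len(range_image[0])
    bins = {}
    for r in range(rows):
        for c in range(cols):
            d = range_image[r][c]
            if 0.0 < d < max_range:  # drop no-return and out-of-range pixels
                bins.setdefault(int(d // bin_width), []).append((r, c, d))
    if not bins:
        return None
    peak = max(bins.values(), key=len)
    n = len(peak)
    mean_r = sum(p[0] for p in peak) / n
    mean_c = sum(p[1] for p in peak) / n
    mean_d = sum(p[2] for p in peak) / n
    azimuth = (mean_c - (cols - 1) / 2) / cols * fov_deg
    elevation = ((rows - 1) / 2 - mean_r) / rows * fov_deg
    return mean_d, azimuth, elevation


# A 4x4 range image: zeros are pixels with no return, the target is right of centre.
image = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 10.0, 10.2],
    [0.0, 0.0, 10.1, 10.3],
    [0.0, 0.0, 0.0, 0.0],
]
estimate = range_and_bearing(image, bin_width=1.0, fov_deg=20.0, max_range=100.0)
```

An estimate of this kind needs only one pass over the image, which is consistent with using it to seed the slower iterative pose solution.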

3D Lidar for Autonomous Landing Site Selection

Aerial planetary exploration spacecraft require lightweight, compact, and low power sensing systems to enable successful landing operations. The Ocellus 3D lidar meets those criteria as well as being able to withstand harsh planetary environments. Further, the new tool is based on space-qualified components and lidar technology previously developed at NASA Goddard (i.e., the Kodiak 3D lidar) as shown in the figure below.

The Ocellus 3D lidar quickly scans a near infrared laser across a planetary surface, receives that signal, and translates it into a 3D point cloud. Using a laser source, fast scanning MEMS (micro-electromechanical system)-based mirrors, and NASA-developed processing electronics, the 3D point clouds are created and converted into elevations and images onboard the craft. At ~2 km altitudes, Ocellus acts as an altimeter and at altitudes below 200 m the tool produces images and terrain maps. The produced high resolution (centimeter-scale) elevations are used by the spacecraft to assess safe landing sites.

The Ocellus 3D lidar is applicable to planetary and lunar exploration by unmanned or crewed aerial vehicles and may be adapted for assisting in-space servicing, assembly, and manufacturing operations. Beyond exploratory space missions, the new compact 3D lidar may be used for aerial navigation in the defense or commercial space sectors. The Ocellus 3D lidar is available for patent licensing.

LiDAR with Reduced-Length Linear Detector Array

The LiDAR with Reduced-Length Linear Detector Array improves upon a prior fast-wavelength-steering, time-division-multiplexing 3D imaging system with two key advancements: laser linewidth broadening to reduce speckle noise and improve the signal-to-noise ratio, and the integration of a slow-scanning mirror with wavelength-steering technology to enable 2D swath mapping capabilities. Range and velocity are measured using the time-of-flight of short laser pulses. This highly efficient LiDAR incorporates emerging technologies, including a photonic integrated circuit seed laser, a high peak-power fiber amplifier, and a linear-mode photon-sensitive detector array.
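For reference, pulsed time-of-flight ranging converts a round-trip delay into distance using the speed of light. The sketch below shows that relation; deriving velocity from the change in range between two pulses is an assumption for illustration, since the source does not state how velocity is obtained, and the function names are hypothetical.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def tof_range(round_trip_s):
    """One-way range in metres from a round-trip pulse delay in seconds."""
    return C * round_trip_s / 2.0


def radial_velocity(round_trip_1_s, round_trip_2_s, pulse_interval_s):
    """Range rate in m/s from two delays measured `pulse_interval_s` apart.

    Negative values mean the surface is getting closer.
    """
    return (tof_range(round_trip_2_s) - tof_range(round_trip_1_s)) / pulse_interval_s


range_m = tof_range(1e-6)  # a 1 microsecond round trip is roughly 150 m
```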

With no moving parts, the transmitter rapidly steers a single high-power laser beam across up to 2,000 resolvable footprints. Fast beam steering is achieved through an innovative high-speed wavelength-tuning technology and a single grating design that enables wavelength-to-angle dispersion while rejecting solar background for all transmitted wavelengths. To optimize receiver power and reduce data volume, sequential returns from up to 10 different tracks are time-division-multiplexed and digitized by a high-speed digitizer for surface ranging. Each track’s atmospheric return can be digitized in parallel at a lower resolution using an ultra-low-power digitizer.

Originally developed by NASA for SmallSat missions, this system’s precise and accurate observation capabilities—combined with reduced costs, size, weight, and power constraints—make it applicable to a wide range of LiDAR applications. The LiDAR with Reduced-Length Linear Detector Array is currently at Technology Readiness Level (TRL) 4 (validated in a laboratory environment) and is available for patent licensing.

Optical Head-Mounted Display System for Laser Safety Eyewear

The system combines laser goggles with an optical head-mounted display that shows a real-time video camera image of a laser beam. Users can visualize the laser beam while their eyes remain protected. The system also allows for numerous additional features in the optical head-mounted display, such as digital zoom, overlays of additional information such as power meter data, a Bluetooth wireless interface, and digital overlays of beam location. The system is built on readily available components and can be used with existing laser eyewear. The software converts the color being observed to another color that transmits through the goggles. For example, if a red laser is being used and red-blocking glasses are worn, the software can convert red to blue, which is readily transmitted through the laser eyewear. Similarly, color video can be converted to black-and-white to transmit through the eyewear.
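The red-to-blue example can be pictured as a per-pixel channel remap. The sketch below is a hypothetical illustration using (R, G, B) tuples with 0 to 255 values; the actual software and its color mapping are not described in the source.

```python
def red_to_blue(pixel):
    """Move red-channel intensity into the blue channel of an (R, G, B) pixel."""
    r, g, b = pixel
    return (0, g, max(r, b))


def remap_frame(frame):
    """Apply the remap to every pixel of a frame given as rows of RGB tuples."""
    return [[red_to_blue(p) for p in row] for row in frame]


# A red laser spot next to a green background pixel.
frame = [[(255, 0, 0), (0, 128, 0)]]
display_frame = remap_frame(frame)
```

After the remap the laser spot is rendered in blue, which red-blocking eyewear would pass, while pixels with no red content are unchanged.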